Hessian-Free Online Certified Unlearningedit

ICLR 2025 paper on efficient Hessian-free certified machine unlearning.

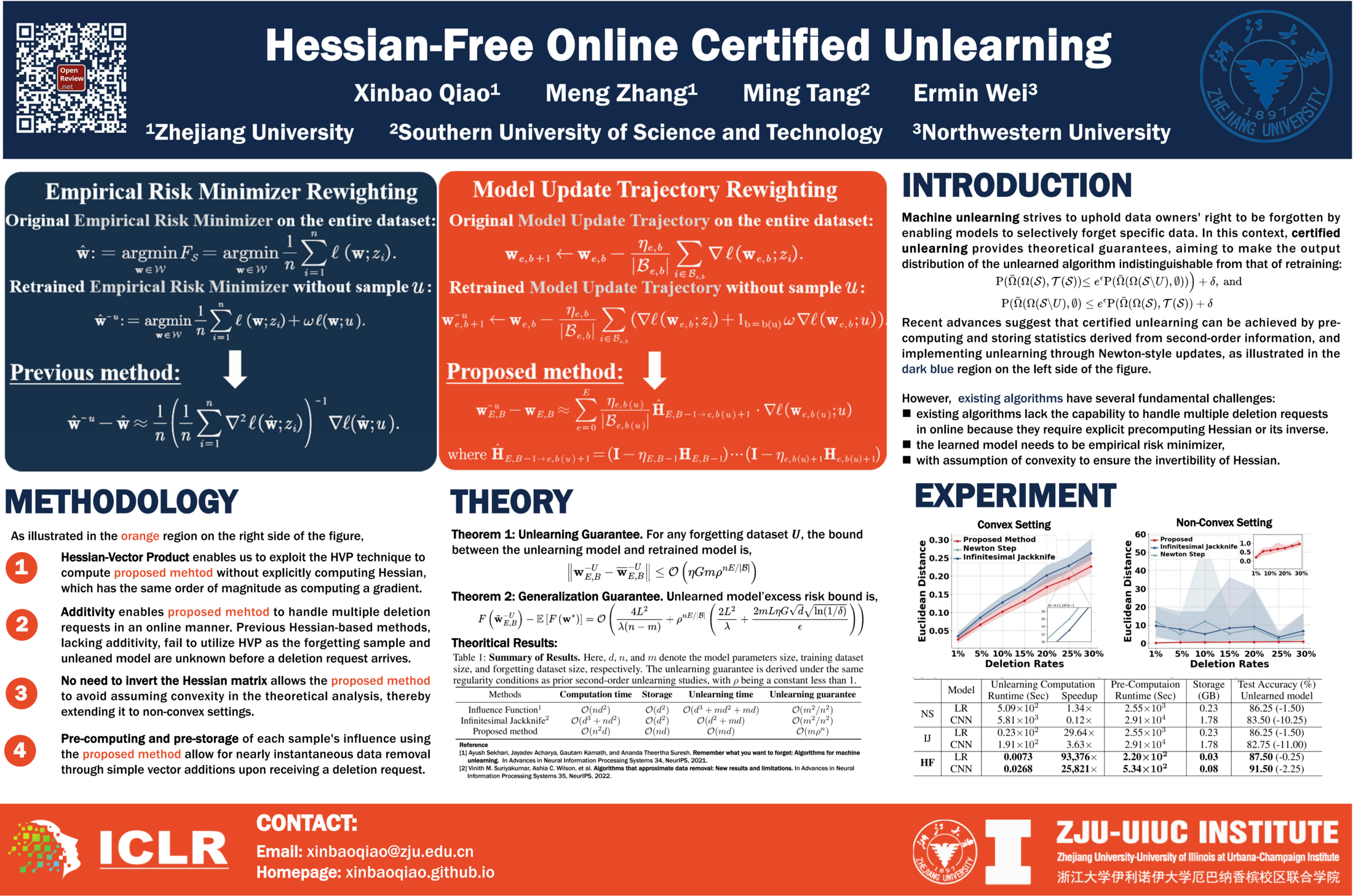

Hessian-Free Online Certified Unlearning is an ICLR 2025 conference paper by Xinbao Qiao, Meng Zhang, Ming Tang, and Ermin Wei. The paper develops a certified unlearning procedure for models where explicit Hessian storage or inversion would be too costly.

Overviewedit

The paper studies certified data removal for models that cannot afford explicit Hessian construction, Hessian inversion, or a strict convex empirical-risk-minimizer assumption. Earlier certified-unlearning methods often use Newton-style corrections from stored second-order statistics, but those matrix operations become impractical for high-dimensional and over-parameterized models.

The paper's central move is to treat training as a trajectory rather than only a final optimizer. It records per-sample trajectory statistics that approximate how the learned model would have changed if a sample had been absent during stochastic training.

Methodedit

The method recollects an approximator for each training point through affine stochastic recursion. The recursion tracks the discrepancy between the model trained on the full dataset and the counterfactual model retrained without a requested sample. Because the update can be computed through Hessian-vector products, the algorithm avoids materializing the full Hessian matrix while retaining a certificate-style approximation guarantee.

Once the recollected vectors have been computed, online deletion becomes additive: a batch of deletion requests is handled by summing the stored per-sample approximators and applying a vector update to the current model.

Key formulaedit

The page uses a compact notation for the core update. Here denotes the recollected trajectory approximator for deleting sample , and denotes the accumulated Hessian-vector-product operator along the later training trajectory.

The stored approximator has the form:

For a deletion set , the online unlearning update is additive:

The additivity result is the operational reason the online stage is cheap: after precomputation, a deletion request does not require solving a new linear system or inverting a Hessian.

Resultsedit

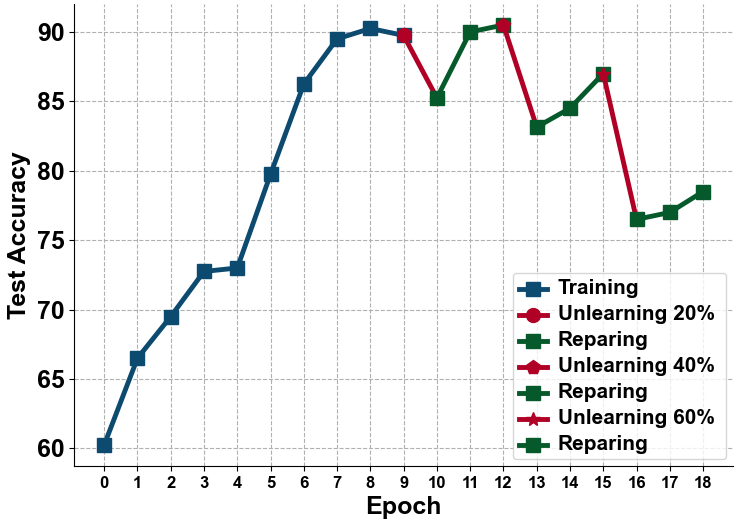

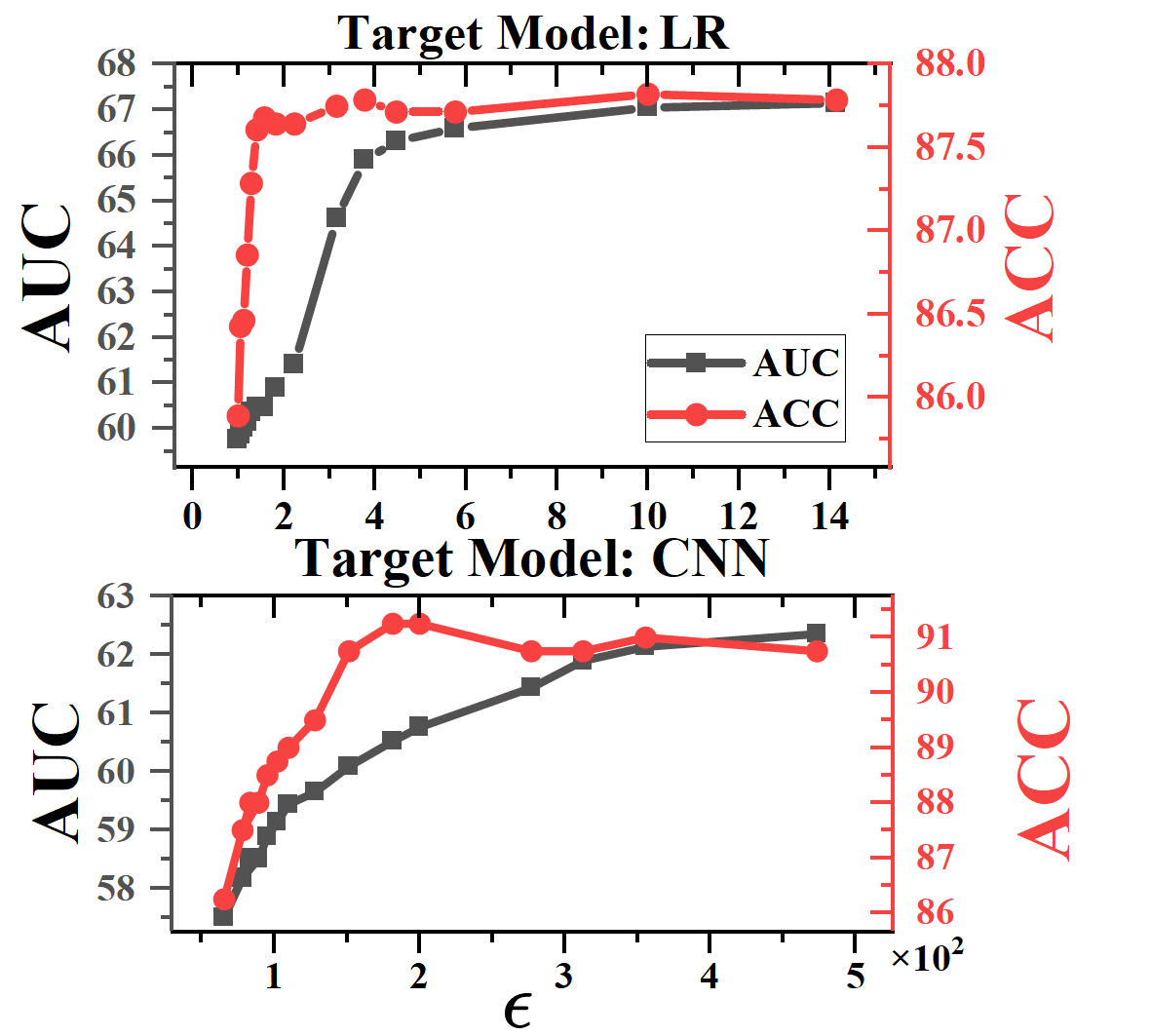

The paper reports millisecond-level unlearning execution and orders-of-magnitude lower time and storage costs than Hessian-based certified-unlearning baselines. In large-scale application experiments, the method removes a sample through vector additions while preserving test accuracy close to retraining.

The experiments also include membership-inference analysis. The reported trade-off is that certified unlearning should be evaluated not only for approximation-to-retraining and utility, but also for privacy leakage under repeated model releases.

Placementedit

This work belongs to Machine Unlearning, Certified Data Removal, and Trustworthy AI. Within Qiao's publication record, it is the differentiable-model counterpart to DynFrs: An Efficient Framework for Machine Unlearning in Random Forest, which studies exact unlearning for tree ensembles.